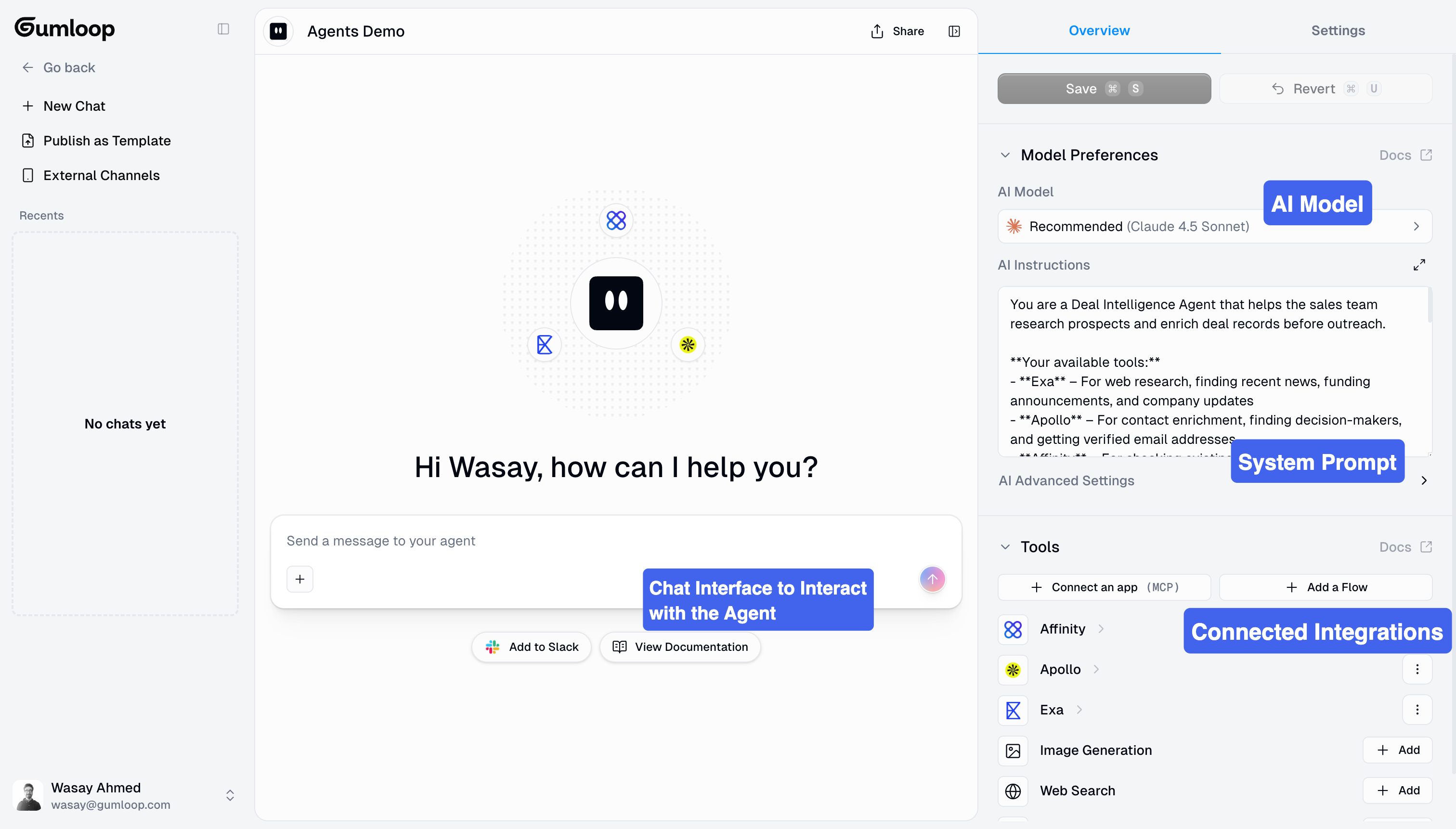

What Are Agents?

Think of agents as intelligent assistants that can orchestrate your workflows. You give them a goal, provide them with tools (integrations and workflows), and they figure out how to accomplish the task by deciding which tools to use and when.

Key Characteristics:

- Adaptive: Different approaches for different situations

- Tool-driven: Use integrations and workflows as needed

- Conversational: Interactive back-and-forth discussions

- Context-aware: Consider your instructions and conversation history

Creating Your First Agent

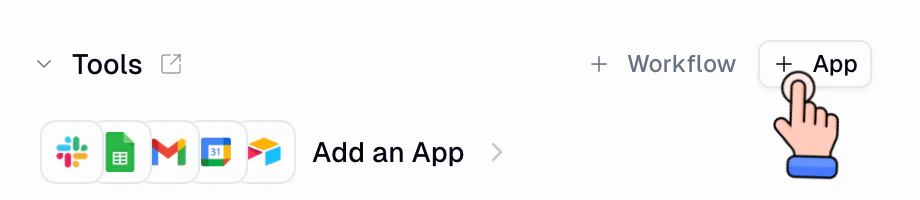

Add Tools

Write Instructions

Test Thoroughly

- Start Simple: Test basic functionality with straightforward requests

- Find Edge Cases: Try unexpected inputs and ambiguous requests

- Refine Instructions: When mistakes occur, ask the agent: “What could I add to your instructions to help you handle this correctly next time?”

- Document Patterns: Keep notes on successful approaches

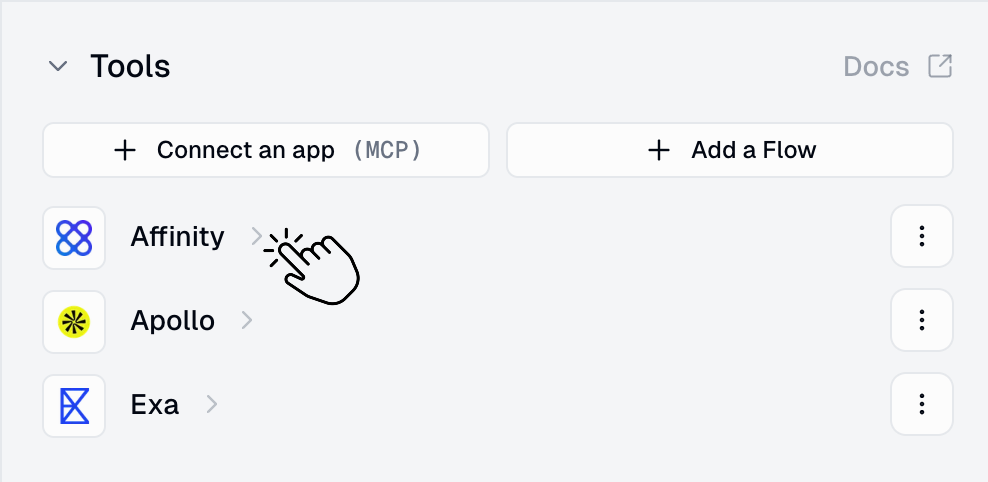

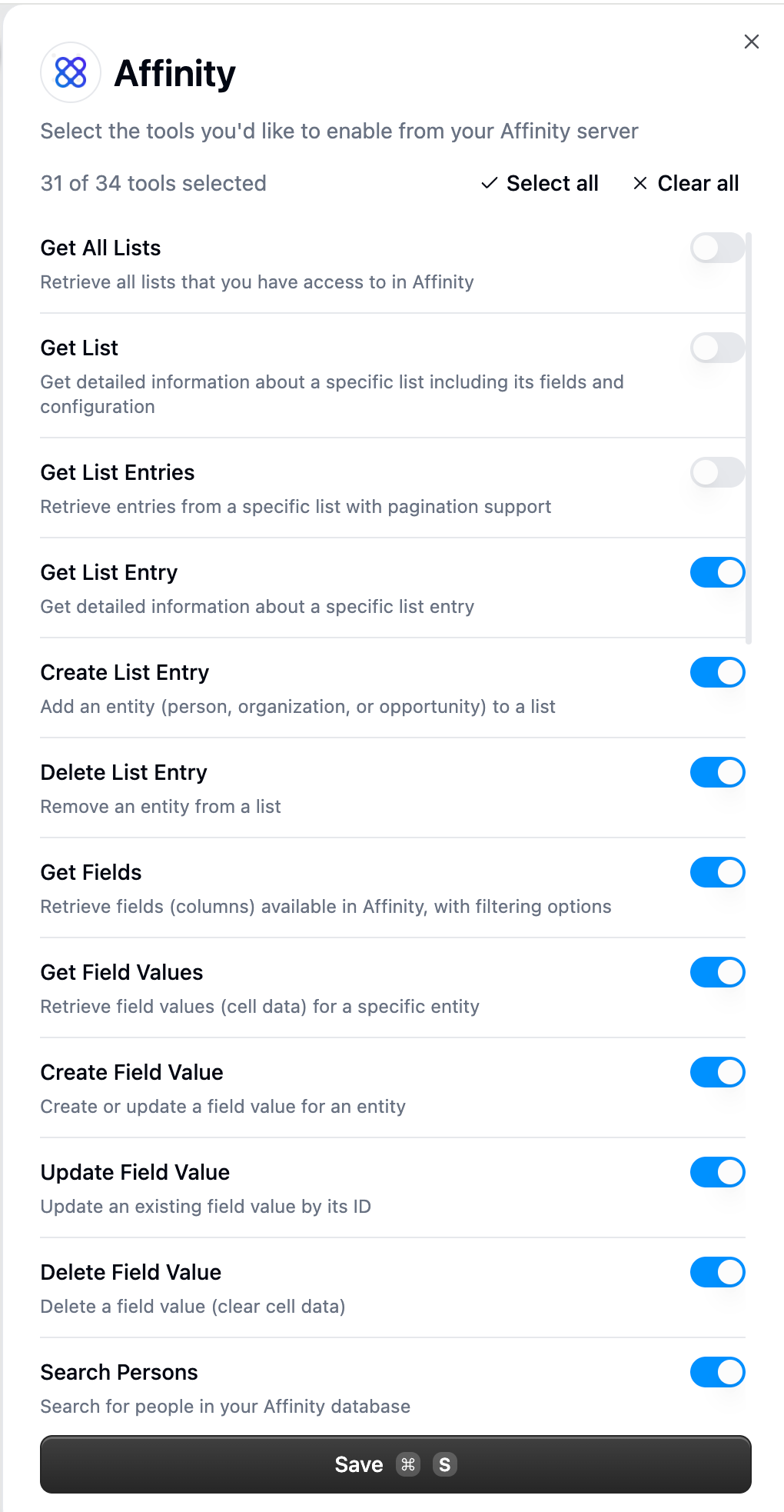

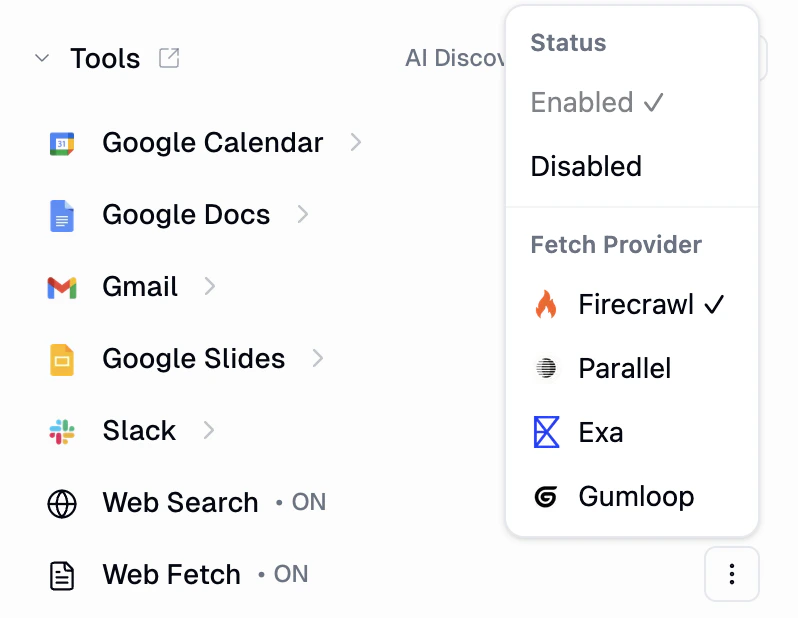

Limiting Tool Capabilities (Recommended)

- Click on any MCP integration you’ve added (Gmail, Salesforce, Slack, etc.)

- Toggle off specific tools you don’t want the agent to use

For example:

- Gmail: ✅ Search emails, Read emails → ❌ Send email, Delete email

- Salesforce: ✅ Get account, Search records → ❌ Delete record, Update record

- Slack: ✅ Read messages, Search channels → ❌ Send message, Create channel

Why limit tools:

- Prevents destructive actions (deleting, sending)

- Makes agent behavior more predictable

- Reduces risk of unintended operations

- Improves reliability by limiting decision space
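Toggling tools off works like an allowlist: tools you have not enabled never enter the agent's decision space. The sketch below models that idea in Python. You configure this in Gumloop's UI, not in code, and the tool identifiers (`search_emails`, `send_email`, and so on) are hypothetical.

```python
# Hypothetical allowlists mirroring the per-integration toggles above.
# Tool identifiers are illustrative, not Gumloop's real names.
ENABLED_TOOLS: dict[str, set[str]] = {
    "gmail": {"search_emails", "read_email"},
    "salesforce": {"get_account", "search_records"},
    "slack": {"read_messages", "search_channels"},
}


def visible_tools(integration: str, offered: list[str]) -> list[str]:
    """Keep only tools toggled on for this integration, preserving order."""
    allowed = ENABLED_TOOLS.get(integration, set())
    return [tool for tool in offered if tool in allowed]


gmail_offered = ["search_emails", "read_email", "send_email", "delete_email"]
print(visible_tools("gmail", gmail_offered))  # ['search_emails', 'read_email']
```

Unknown integrations map to an empty set, so the sketch exposes nothing unless it is explicitly enabled; that default mirrors the advice to start restrictive.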

How Agents Work

Agents operate through a framework of Tools, Instructions, Skills and Reasoning.

Tools (What Agents Can Use)

Agents are equipped with tools to accomplish tasks:

MCP Integrations

- Gmail: Read, search, and send emails

- Salesforce: Query records and update data

- Notion: Search documentation and databases

- Zendesk: Retrieve and manage support tickets

- Google Calendar: Check availability and schedule meetings

- And many more

Gumloop Workflows

- Agents see the workflow name and description

- Understand expected inputs and outputs

- Call workflows when appropriate

- Process results to inform next steps

Custom MCP Servers

- Internal company APIs

- Custom data sources

- Specialized tools
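To make the custom-server idea concrete: an MCP client discovers a server's tools with a `tools/list` request and invokes one with `tools/call`, both sent as JSON-RPC 2.0 messages. The sketch below handles only those two methods for a hypothetical internal `lookup_order` API (stubbed with a dict). It deliberately omits the `initialize` handshake and the stdio or HTTP transport, which an official MCP SDK would normally handle for you.

```python
import json


# Hypothetical internal API call, stubbed with a dict for illustration.
def lookup_order(order_id: str) -> str:
    orders = {"A100": "shipped", "A101": "processing"}
    return orders.get(order_id, "not found")


# Tool metadata in the shape an MCP tools/list response uses.
TOOLS = [{
    "name": "lookup_order",
    "description": "Look up the status of an internal order by its ID.",
    "inputSchema": {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
}]


def handle(raw: str) -> dict:
    """Handle one JSON-RPC 2.0 request for the tools/list and tools/call methods."""
    request = json.loads(raw)
    reply = {"jsonrpc": "2.0", "id": request.get("id")}
    method = request.get("method")
    params = request.get("params", {})
    if method == "tools/list":
        reply["result"] = {"tools": TOOLS}
    elif method == "tools/call":
        if params.get("name") != "lookup_order":
            reply["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        else:
            status = lookup_order(params.get("arguments", {}).get("order_id", ""))
            reply["result"] = {"content": [{"type": "text", "text": status}], "isError": False}
    else:
        reply["error"] = {"code": -32601, "message": f"Method not found: {method}"}
    return reply  # a real server would serialize this back over its transport


call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
        "params": {"name": "lookup_order", "arguments": {"order_id": "A100"}}}
print(handle(json.dumps(call))["result"]["content"][0]["text"])  # shipped
```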

Code Sandbox (Built-in)

- Run Python code for data analysis, visualizations, and computations

- Execute shell commands for file operations and package installation

- Read/write files in the sandbox filesystem

- Upload/download files between Gumloop storage and the sandbox

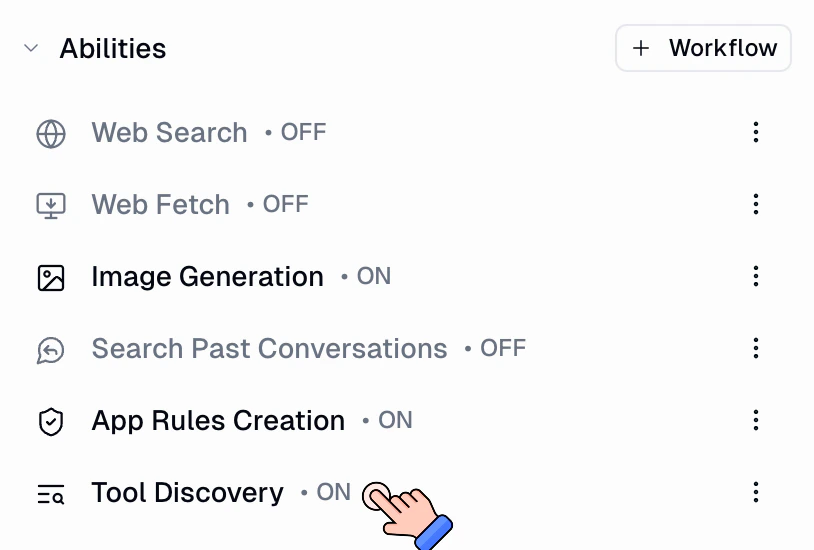

Web Search & Web Fetch (Built-in)

- Web Search: Search the web for information relevant to the current task

- Web Fetch: Retrieve and read content from specific URLs

Search Past Conversations

- Search by keyword: The agent searches past conversations for messages matching your query, ranked by relevance

- Retrieve full conversations: Once a relevant conversation is found, the agent can load the full message history for detailed context

- Date filtering: Narrow searches to specific time ranges (e.g., conversations from the last week)

- Team-wide search: For project/team agents, search across all team members’ conversations — not just your own

For example, you can ask your agent:

“Look at the past 10 conversations and find gaps in how you handled requests. Then update your skills or system prompt based on the feedback I provided in those interactions.”

Because your agent can reflect on its own history, it can identify patterns, spot recurring issues, and proactively refine its behavior. This creates a feedback loop where every conversation makes the agent smarter and more aligned with how you work.

Use cases:

- Self-improvement: Ask your agent to review past conversations and update its skills or instructions based on what it learns

- Context recall: “What did we discuss about the Q3 marketing plan?” — the agent finds and summarizes relevant past conversations

- Pattern detection: Identify recurring questions or issues across conversations to improve workflows

- Onboarding: New team members can ask the agent to surface relevant past discussions on a topic
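The documentation does not say how Gumloop ranks past messages, so the following is only an illustration of keyword search with a date filter, using plain term-frequency scoring as a stand-in for whatever relevance model is actually used. `Message` and `search` are hypothetical names, and the sample history is invented.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Message:
    conversation_id: str
    text: str
    sent_at: datetime


def tokenize(text: str) -> list[str]:
    return re.findall(r"\w+", text.lower())


def search(messages: list[Message], query: str,
           since: Optional[datetime] = None, limit: int = 5) -> list[Message]:
    """Rank messages by how often they mention the query terms (newest first on ties)."""
    terms = tokenize(query)
    scored = []
    for msg in messages:
        if since is not None and msg.sent_at < since:
            continue  # date filter, e.g. "the last week"
        words = tokenize(msg.text)
        score = sum(words.count(term) for term in terms)
        if score > 0:
            scored.append((score, msg.sent_at, msg))
    scored.sort(key=lambda item: (item[0], item[1]), reverse=True)
    return [msg for _, _, msg in scored[:limit]]


now = datetime(2025, 1, 31)
history = [
    Message("c1", "Draft of the Q3 marketing plan", now - timedelta(days=2)),
    Message("c2", "Lunch order for Friday", now - timedelta(days=1)),
    Message("c3", "Q3 marketing budget vs. Q2 marketing spend", now - timedelta(days=30)),
]
print([m.conversation_id for m in search(history, "Q3 marketing plan")])  # ['c1', 'c3']
```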

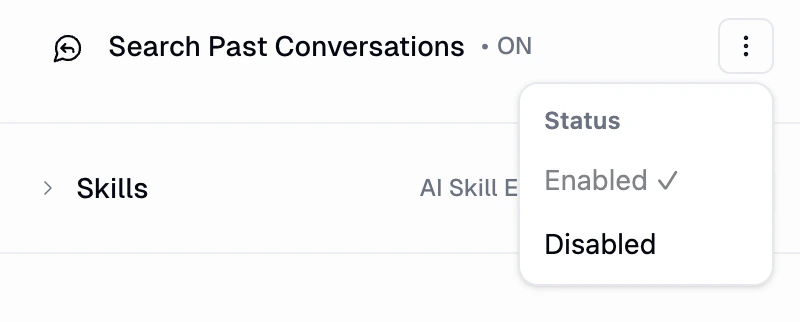

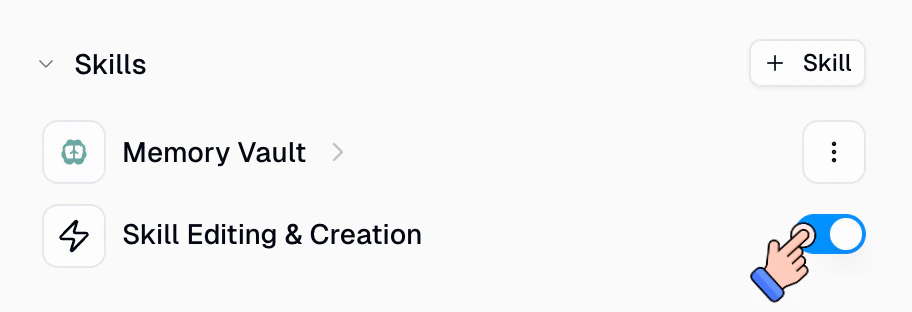

Skill Editing & Creation

Tool Discovery (Custom Agents)

- Keeps the agent’s context lean so more tokens are available for reasoning and conversation

- Lets you connect a large number of MCP servers without bloating every request

- The agent still has access to all connected tools, it just loads their schemas on demand
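On-demand loading can be illustrated with a small registry that keeps only one-line summaries in context and fetches a full schema the first time a tool is needed. This is a hypothetical sketch of the idea, not Gumloop's implementation; the tool names and schemas are invented.

```python
from typing import Callable


class ToolRegistry:
    """Expose short tool summaries up front; fetch full schemas only when requested."""

    def __init__(self, summaries: dict[str, str], loaders: dict[str, Callable[[], dict]]):
        self._summaries = summaries
        self._loaders = loaders
        self._cache: dict[str, dict] = {}
        self.loads = 0  # how many schemas were actually fetched

    def index(self) -> list[str]:
        # This compact list is all that sits in the agent's context by default.
        return [f"{name}: {summary}" for name, summary in self._summaries.items()]

    def schema(self, name: str) -> dict:
        if name not in self._cache:
            self._cache[name] = self._loaders[name]()
            self.loads += 1
        return self._cache[name]


registry = ToolRegistry(
    summaries={"zendesk_get_ticket": "Fetch a support ticket",
               "calendar_find_slot": "Find a free meeting slot"},
    loaders={
        "zendesk_get_ticket": lambda: {"type": "object", "properties": {"ticket_id": {"type": "string"}}},
        "calendar_find_slot": lambda: {"type": "object", "properties": {"duration_min": {"type": "integer"}}},
    },
)
registry.schema("zendesk_get_ticket")
registry.schema("zendesk_get_ticket")  # second call is served from the cache
print(registry.loads)  # 1
```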

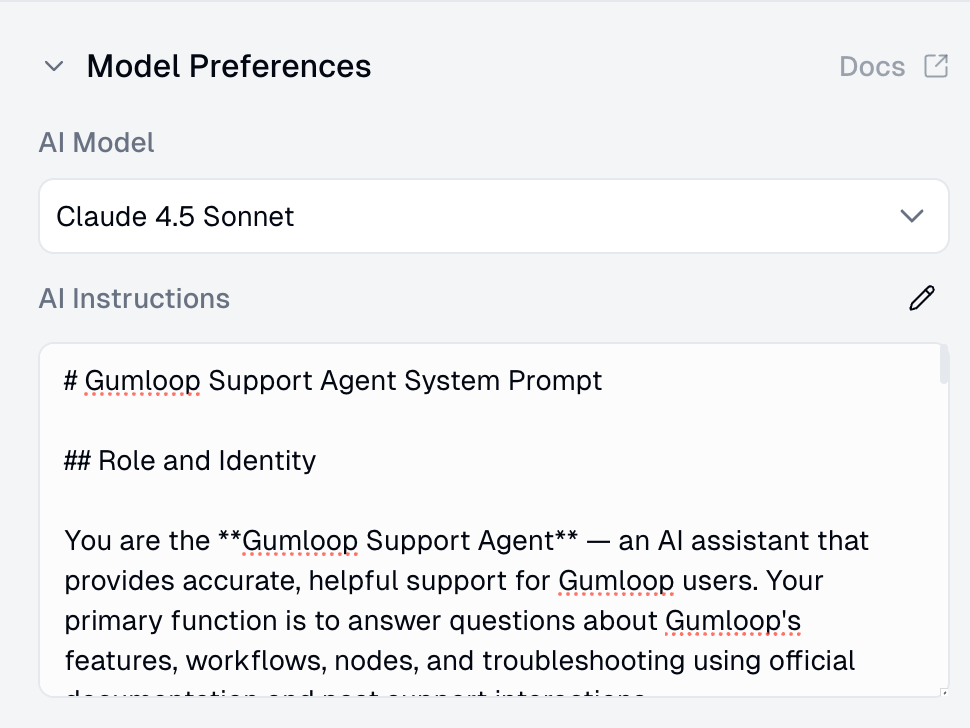

Instructions (How Agents Behave)

The system prompt defines your agent’s personality, behavior, and decision-making:

Define a Role

Establish Confirmation Rules

Tell the agent to ask for confirmation before taking sensitive actions, such as:

- Sending emails

- Deleting data

- Making changes to external systems

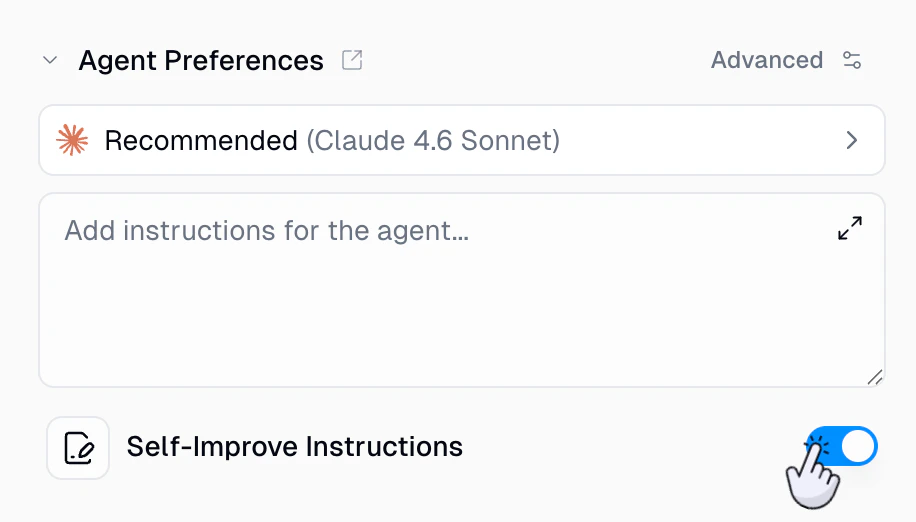

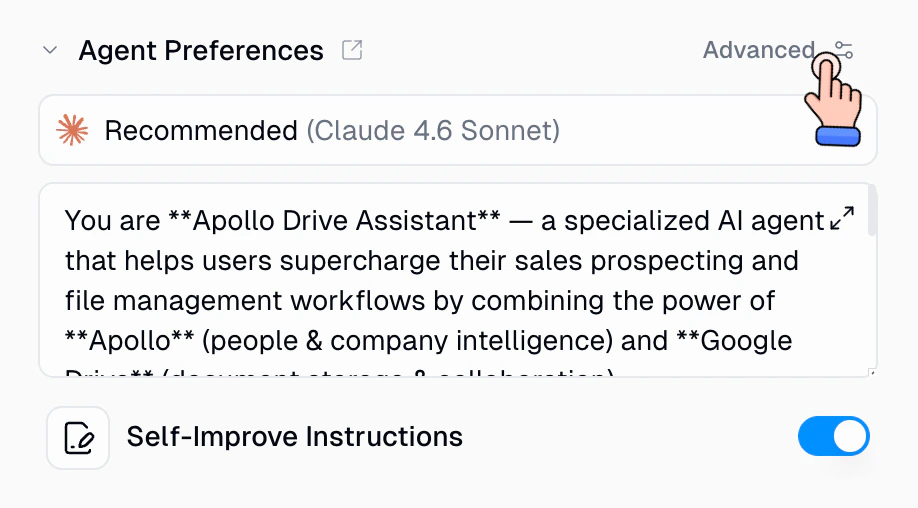

Self-Improve Instructions

Your agent can update its own system prompt during any conversation. If something goes wrong, you don’t need to leave the chat and manually edit the instructions. Just tell the agent to fix it, right there in the conversation.

You tell the agent

The agent learns on its own

How it works under the hood

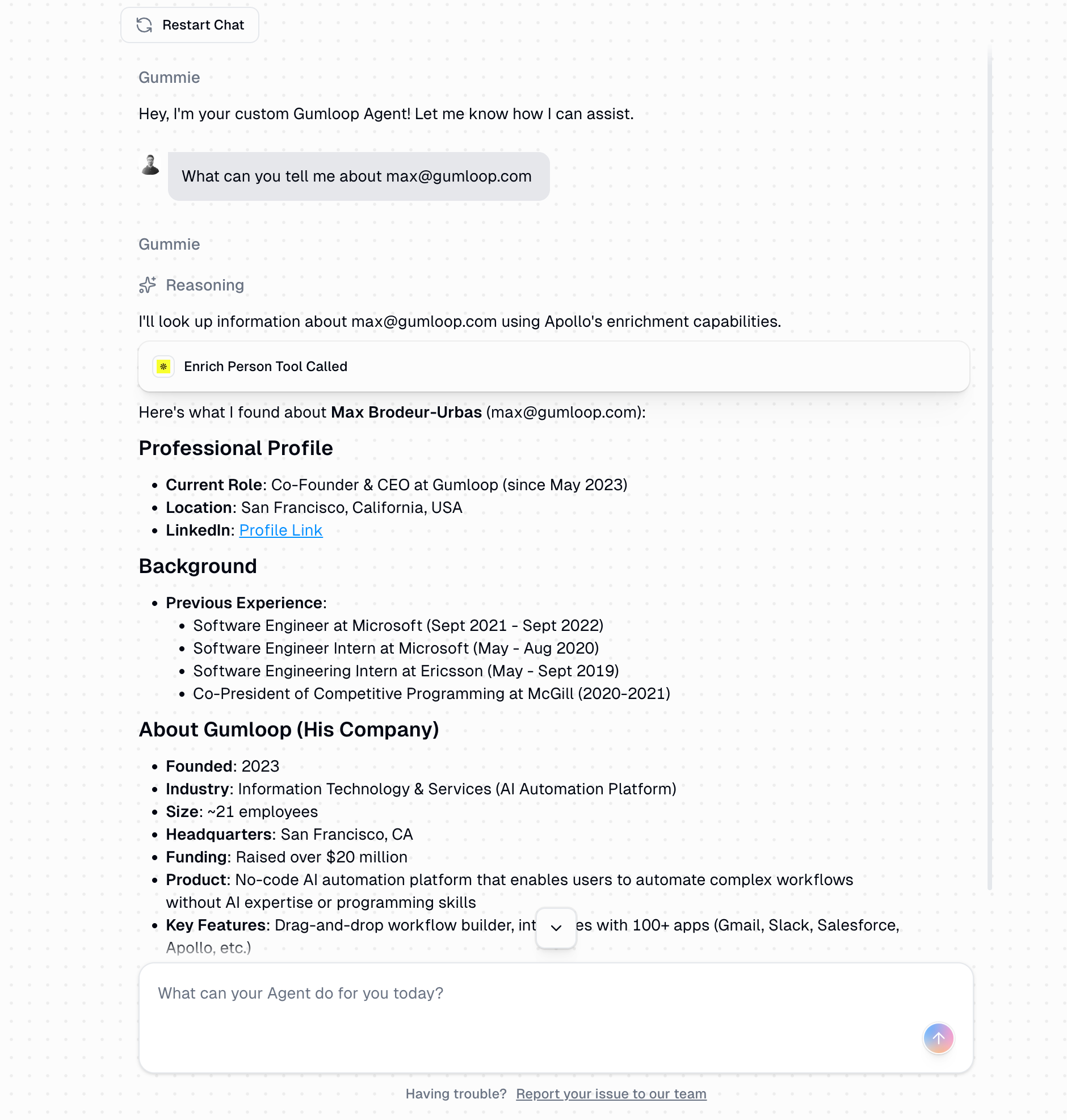

Reasoning (How Agents Think)

When you assign a task, the agent:

- Analyzes the request and available tools

- Decides which tools to use and in what order

- Executes tool calls and adapts based on results

- Asks for confirmation when needed (based on instructions)

- Explains its reasoning step-by-step
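The reasoning steps above amount to a decide, act, observe loop. In this minimal sketch a hard-coded `demo_policy` stands in for the model's decisions, and a `confirm` callback models a confirmation rule for sensitive tools such as sending email. All names (`run_agent`, `search_crm`, `send_email`) are hypothetical.

```python
from typing import Callable, Optional

Step = Optional[tuple[str, str]]  # (tool name, tool input), or None when finished


def run_agent(task: str,
              tools: dict[str, Callable[[str], str]],
              choose_step: Callable[[list[tuple[str, str]]], Step],
              needs_confirmation: set[str],
              confirm: Callable[[str, str], bool],
              max_steps: int = 5) -> list[tuple[str, str]]:
    """Decide, act, observe, repeat; pause for confirmation on sensitive tools."""
    history = [("user", task)]
    for _ in range(max_steps):
        step = choose_step(history)  # stand-in for the model's decision
        if step is None:
            break
        name, arg = step
        if name in needs_confirmation and not confirm(name, arg):
            history.append((name, "skipped: user declined"))
            continue
        history.append((name, tools[name](arg)))  # result informs the next decision
    return history


def demo_policy(history: list[tuple[str, str]]) -> Step:
    last_actor, last_output = history[-1]
    if last_actor == "user":
        return ("search_crm", "Acme")
    if last_actor == "search_crm":
        return ("send_email", last_output)
    return None  # stop after the email step, whether it was sent or skipped


tools = {
    "search_crm": lambda company: f"contact at {company}",
    "send_email": lambda body: f"sent email about {body}",
}
declined = run_agent("Follow up with Acme", tools, demo_policy, {"send_email"}, lambda n, a: False)
print(declined[-1])  # ('send_email', 'skipped: user declined')
```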

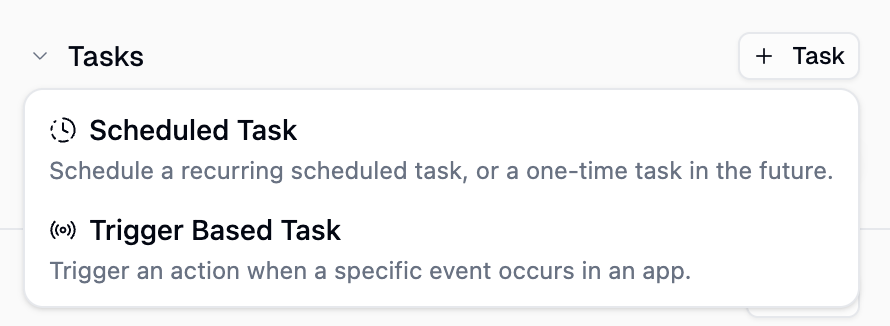

Triggers

Agents can run autonomously without manual interaction. Set up scheduled triggers to run on a recurring schedule or as a one-time trigger, or create event-based triggers to fire your agent when something happens in an external service.

Scheduled Triggers

Event-Based Triggers

Agent Triggers Guide

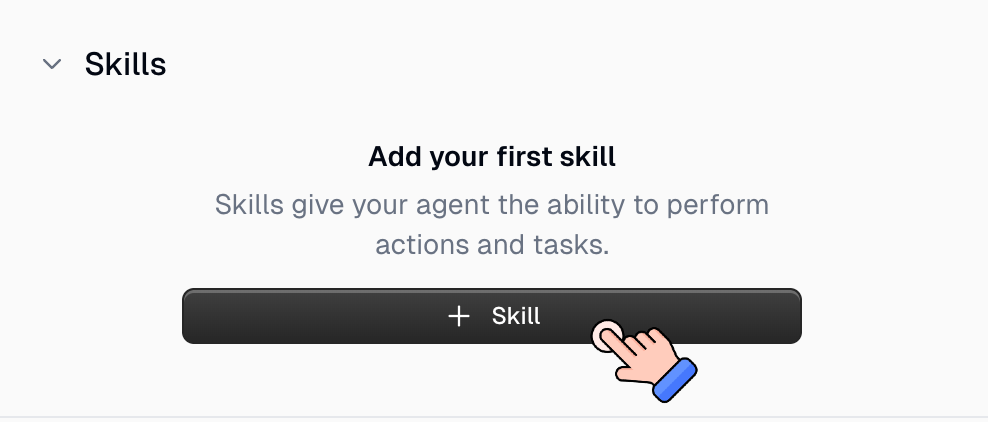

Skills

Your system prompt defines who the agent is. Tools give it the ability to act. But without skills, your agent improvises every time. It might send a decent email, but it won’t follow your outreach sequence, use your templates, or log things the way you want. Skills fill that gap. They’re reusable knowledge packs that teach your agent how to do specific work your way:

- Encode multi-step processes the agent should follow every time (outreach sequences, triage checklists, reporting workflows)

- Store templates and domain knowledge too detailed for the system prompt

- Load only when relevant, saving tokens compared to stuffing everything into the system prompt

- Improve over time as the agent learns from your feedback and even creates new skills on its own

Agent Skills Guide

Embedding Agents in Workflows

Creating an agent is the 0 to 1. Embedding that agent in a workflow is the 1 to 100.

| Capability | Standalone Agent | Agent in Workflow |
|---|---|---|
| Manual Chat | ✅ Yes | ✅ Yes |
| Scheduled Runs | ✅ Yes | ✅ Yes |
| Event-Based Triggers | ✅ Yes | ✅ Yes |
| Webhook Triggers | ✅ Yes | ✅ Yes |
| Chain with Other Nodes | ❌ No | ✅ Yes |
| Batch Processing | ❌ No | ✅ Yes |

Before Agents

- Monitor conditions manually

- Decide when to run workflows

- String workflows together yourself

- Handle exceptions and edge cases

With Agents

- Monitors and responds to requests

- Decides which workflows to use

- Chains workflows intelligently

- Adapts to results and conditions

Using Workflows as Agent Tools

The section above covers putting an agent inside a workflow, where the workflow triggers and orchestrates the agent. This is the reverse: giving your agent workflows it can call as tools. When a workflow is attached as a tool, the agent decides when to use it, fills in the inputs, kicks it off, and reads the outputs. The agent is the orchestrator; the workflow just does its job and returns the result.

Tips for building workflows your agent will call:

Use Input and Output Nodes

Use Descriptive Names

Add Clear Descriptions

- What the workflow does

- When it should be used

- What inputs it expects

- What outputs it provides

Keep Workflows Focused

- ✅ “Enrich Contact from Email”

- ✅ “Send Slack Notification”

- ❌ “Enrich Contact and Send Notification and Update CRM”
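Because the agent sees a workflow's name, description, inputs, and outputs, a well-built workflow maps cleanly onto a tool definition. The sketch below assumes a generic JSON-Schema-style function definition (Gumloop generates its own internally) and adds a crude check for the "and"-chained names the list above warns against. `WorkflowTool` is a hypothetical class.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowTool:
    name: str
    description: str  # what it does, when to use it, inputs, outputs
    inputs: dict[str, str]  # Input node name -> JSON Schema type
    outputs: dict[str, str] = field(default_factory=dict)  # Output node name -> type

    def to_tool_schema(self) -> dict:
        """Shape the workflow as a generic function-calling tool definition."""
        return {
            "name": self.name,
            "description": self.description,
            "parameters": {
                "type": "object",
                "properties": {key: {"type": typ} for key, typ in self.inputs.items()},
                "required": list(self.inputs),
            },
        }

    def looks_focused(self) -> bool:
        # Crude heuristic: names chaining several jobs with "and" suggest a split.
        return " and " not in self.name.lower()


enrich = WorkflowTool(
    name="Enrich Contact from Email",
    description="Looks up a person's name, title, and company from an email address. "
                "Use when you have an email but no contact details.",
    inputs={"email": "string"},
    outputs={"full_name": "string", "company": "string"},
)
print(enrich.to_tool_schema()["parameters"]["required"])  # ['email']
```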

Code Sandbox

The Code Sandbox gives your agent the ability to execute Python code and shell commands in a secure, isolated environment. It’s natively enabled on all agents, so there’s nothing to configure.

What the sandbox can do:

- Run Python code for data analysis, visualizations, and computations

- Execute shell commands for file operations and package installation

- Read and write files in the sandbox filesystem

- Upload and download files between Gumloop storage and the sandbox

If a library isn’t pre-installed, the agent can install it with pip install during the conversation.

View Pre-installed Libraries (80+)

Limitations

- Execution Timeouts: Python scripts: 120 seconds max. Shell commands: 60 seconds default. Search operations: 30 seconds.

- File Size Limits: Files up to 300 MB can be ingested.

- Session Persistence: The sandbox persists within a single conversation. Starting a new conversation creates a fresh sandbox.

- Network Access: The sandbox has internet access for pip installs and API calls, but cannot access your local network.

- No GUI Support: The sandbox runs headless. Visualizations must be saved to files (e.g., plt.savefig()).

- Resource Constraints: Suitable for data analysis and scripting, not for training large ML models.
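As an example of the kind of script the agent might run in the sandbox, the sketch below averages revenue per region from a small CSV and writes the result to a file, since the sandbox is headless. It uses only the standard library; with matplotlib you would follow the same pattern and call plt.savefig() with a file path. The data and file name are invented.

```python
import csv
import io
import statistics
import tempfile
from pathlib import Path

RAW_CSV = """region,revenue
North,1200
South,800
North,1500
West,950
"""


def mean_revenue_by_region(csv_text: str) -> dict[str, float]:
    """Group rows by region and average the revenue column."""
    groups: dict[str, list[float]] = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        groups.setdefault(row["region"], []).append(float(row["revenue"]))
    return {region: statistics.mean(values) for region, values in groups.items()}


def save_report(summary: dict[str, float], path: Path) -> Path:
    # Headless environment: results go to a file, not a window.
    lines = [f"{region}: {value:.2f}" for region, value in sorted(summary.items())]
    path.write_text("\n".join(lines) + "\n")
    return path


summary = mean_revenue_by_region(RAW_CSV)
report = save_report(summary, Path(tempfile.gettempdir()) / "revenue_report.txt")
print(report.read_text())
```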

Voice Input

You can send audio messages to your agent instead of typing. Gumloop transcribes your audio on the server and sends the text to the agent, so the conversation flows naturally whether you type or talk.

How It Works

- Record or upload an audio file in the chat input

- Gumloop transcribes the audio using an AI transcription model

- The transcribed text appears as your message in the conversation

- The agent responds to the transcribed text like any other message

Supported Formats and Limits

| Detail | Value |
|---|---|
| Supported formats | mp3, mp4, mpeg, mpga, m4a, wav, webm |
| Maximum file size | 25 MB |
| Supported MIME types | audio/aac, audio/flac, audio/m4a, audio/mp3, audio/mp4, audio/mpeg, audio/ogg, audio/wav, audio/webm, video/mp4, video/mpeg, video/webm |
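If you prepare audio files ahead of time, a quick pre-check against the limits in the table can save a failed upload. This sketch checks only the file extension and size. It assumes "25 MB" means 25 MiB (25 × 1024 × 1024 bytes), which the table does not specify, and it does not inspect MIME types; `audio_problems` is a hypothetical helper.

```python
from pathlib import Path

SUPPORTED_EXTENSIONS = {"mp3", "mp4", "mpeg", "mpga", "m4a", "wav", "webm"}
MAX_BYTES = 25 * 1024 * 1024  # assumption: "25 MB" treated as 25 MiB


def audio_problems(filename: str, size_bytes: int) -> list[str]:
    """Return reasons a file would be rejected; an empty list means it looks OK."""
    problems = []
    extension = Path(filename).suffix.lower().lstrip(".")
    if extension not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported format: .{extension}")
    if size_bytes > MAX_BYTES:
        problems.append(f"too large: {size_bytes / (1024 * 1024):.1f} MB (max 25 MB)")
    return problems


print(audio_problems("standup.m4a", 4_000_000))  # []
```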

Transcription Models

Gumloop uses OpenAI transcription models to convert your audio to text:

| Model | Best For |
|---|---|
| OpenAI Whisper | General-purpose transcription, broad language support |
| GPT-4o Transcribe | Higher accuracy, better handling of accents and noisy audio |
| GPT-4o Mini Transcribe | Faster and cheaper, good for clear audio |

Subagents

Subagents let your agent delegate tasks to other agents. Instead of handling everything in a single conversation, your agent can spin up focused helpers that work in parallel, then collect the results and continue.

How Subagents Work

When an agent needs to delegate, it uses the invoke_agent tool to spawn one or more subagents. Each subagent runs as an independent conversation with its own context, tools, and sandbox. Results flow back to the parent agent when finished. There are two types of subagent invocations:

- Self-Cloning

- Invoking Other Agents

Configuring Subagents

Open Agent Configuration

Add Subagents

Behind the Scenes

When the agent invokes subagents:

- Interaction created: Each subagent gets its own interaction record. The parent agent’s conversation references the child interactions, and all subagent conversations are visible in the chat history.

- Parallel execution: Multiple subagents run concurrently. The number of simultaneous subagents is based on your subscription tier.

- Progress tracking: For batch invocations (multiple subagents at once), a shared progress board tracks each child’s status. Sibling agents can see each other’s progress and communicate via broadcast notes.

- Artifact transfer: The parent can transfer specific files from its sandbox to a subagent before execution. After the subagent finishes, its conversation transcript is saved and can be read by the parent.

- Timeout handling: Subagents run as queued background tasks with their own execution budget (approximately half the parent agent’s time limit). If a subagent exceeds its time limit, it receives a graceful abort signal followed by a hard cancellation if it does not stop.

- Depth limits: Self-cloning has a depth limit of 1, meaning a clone cannot clone itself again. However, cross-agent chains (Agent A invokes Agent B, which invokes Agent C) are unlimited.
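The parallel execution and timeout behavior above can be pictured with asyncio: a semaphore caps how many subagents run at once, and each one gets its own time limit. This is a simplified sketch. The concurrency cap and time limit are arbitrary stand-ins for your tier's limit and the roughly half-of-parent budget, `fake_subagent` is hypothetical, and asyncio.wait_for cancels outright instead of sending a graceful abort first.

```python
import asyncio


async def fake_subagent(name: str, seconds: float) -> str:
    await asyncio.sleep(seconds)  # stands in for the subagent's own conversation
    return f"{name}: done"


async def run_batch(jobs: dict[str, float], max_concurrent: int, time_limit: float) -> dict[str, str]:
    """Run subagents concurrently, capped by a semaphore, each with its own time limit."""
    slots = asyncio.Semaphore(max_concurrent)

    async def run_one(name: str, seconds: float) -> tuple[str, str]:
        async with slots:  # waits here if too many are already running
            try:
                return name, await asyncio.wait_for(fake_subagent(name, seconds), time_limit)
            except asyncio.TimeoutError:
                return name, f"{name}: timed out"

    pairs = await asyncio.gather(*(run_one(n, s) for n, s in jobs.items()))
    return dict(pairs)


results = asyncio.run(run_batch({"research": 0.01, "summarize": 0.02, "deep_dive": 1.0},
                                max_concurrent=2, time_limit=0.1))
print(results)
```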

Subagent FAQ

Can any agent use subagents?

Do subagents use the parent's credentials?

Can a subagent invoke its own subagents?

How do I see what a subagent did?

What happens if a subagent fails?

Is there a limit on how many subagents can run at once?

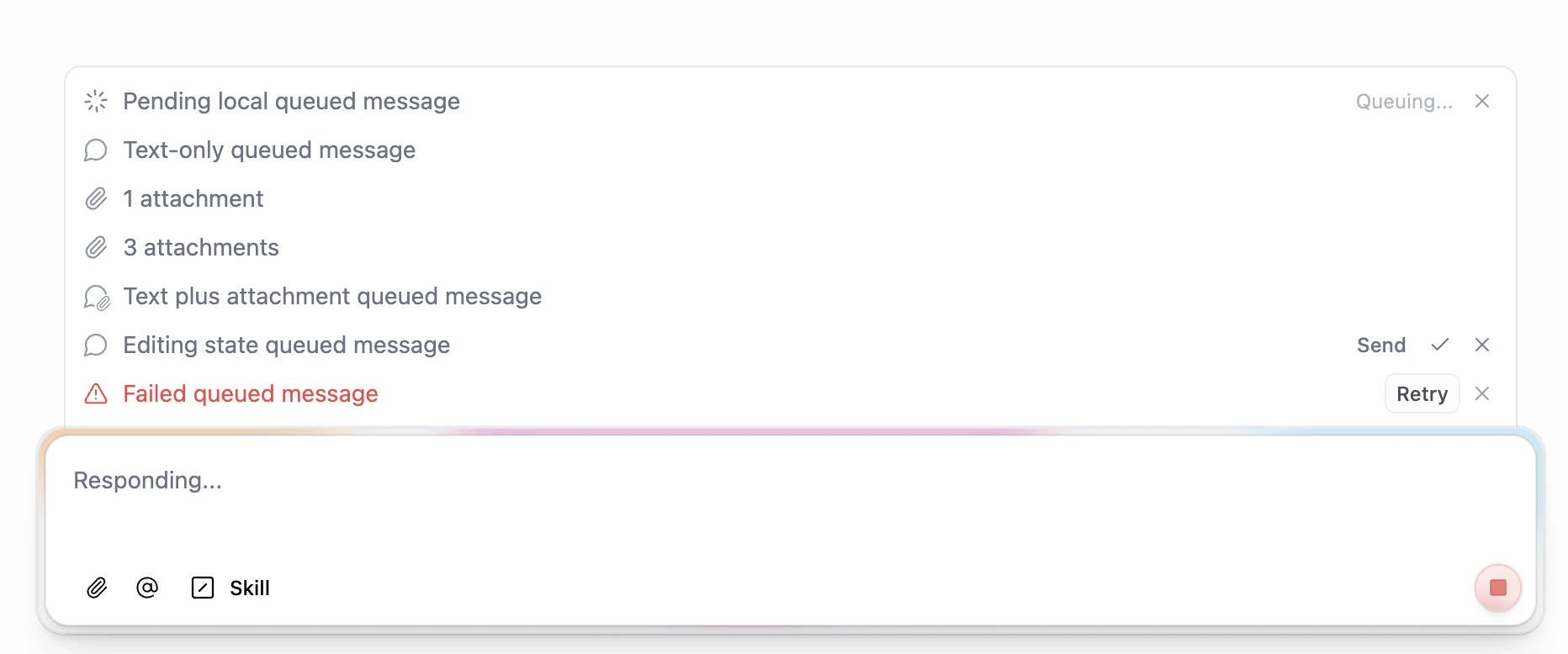

Message Queue and Steering

When your agent is busy processing a response, you don’t have to wait for it to finish before sending your next message. The message queue lets you line up additional messages while the agent is working, and the agent picks them up automatically between processing steps.

How It Works

- While the agent shows “Responding…”, type and send additional messages as usual

- Your messages appear in a queue above the input field, in the order you sent them

- Between processing steps (for example, after the agent finishes a tool call), the agent automatically drains the next queued message and incorporates it into the conversation

- The agent treats the queued message as if you had sent it at that moment, adjusting its response accordingly

What You Can Do With Queued Messages

| Action | Description |
|---|---|
| Queue multiple messages | Send as many follow-ups as you want while the agent is responding |
| Include attachments | Queued messages can contain files and images, just like regular messages |
| Edit before delivery | Click a queued message to edit its text before the agent picks it up |
| Reorder the queue | Drag messages to change the order the agent will process them |
| Remove a message | Cancel a queued message before it gets delivered to the agent |
| Retry failed messages | If a queued message fails to send, you can retry it |

Steering

Because queued messages are injected at natural breakpoints (between model steps, after tool results), they act as steering inputs. You can use this to:

- Redirect the agent: “Actually, focus on the Q3 numbers instead”

- Add missing context: “By the way, the deadline is Friday”

- Refine the request: “Make it more concise” or “Include a chart”

- Stack instructions: Queue up a sequence of tasks for the agent to work through
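The queue-and-drain behavior can be modeled as a loop that delivers one queued message at each breakpoint between steps. This sketch assumes one message per breakpoint and that any leftovers arrive after the final step; it does not model how the agent re-plans in response, which a real model would do. `run_with_queue` is a hypothetical name.

```python
from collections import deque


def run_with_queue(steps: list[str], queued: list[str]) -> list[str]:
    """Deliver at most one queued user message at each breakpoint between steps."""
    queue = deque(queued)  # messages sent while the agent was "Responding..."
    transcript = []
    for step in steps:
        transcript.append(f"agent: {step}")
        if queue:  # natural breakpoint, e.g. right after a tool result
            transcript.append(f"user: {queue.popleft()}")
    # Assumption: anything still queued is delivered once the current run finishes.
    transcript.extend(f"user: {message}" for message in queue)
    return transcript


for line in run_with_queue(["pull sales data", "draft summary"],
                           ["Focus on Q3", "Include a chart", "Deadline is Friday"]):
    print(line)
```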

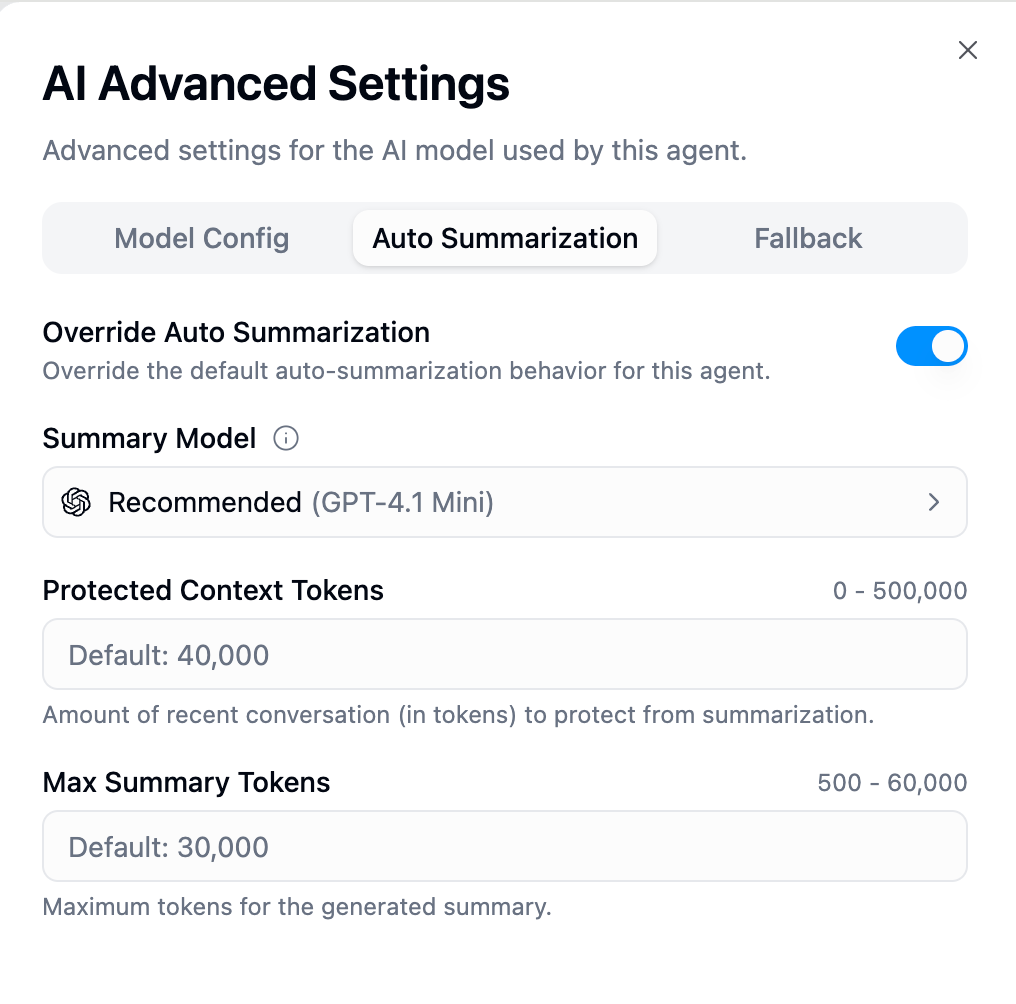

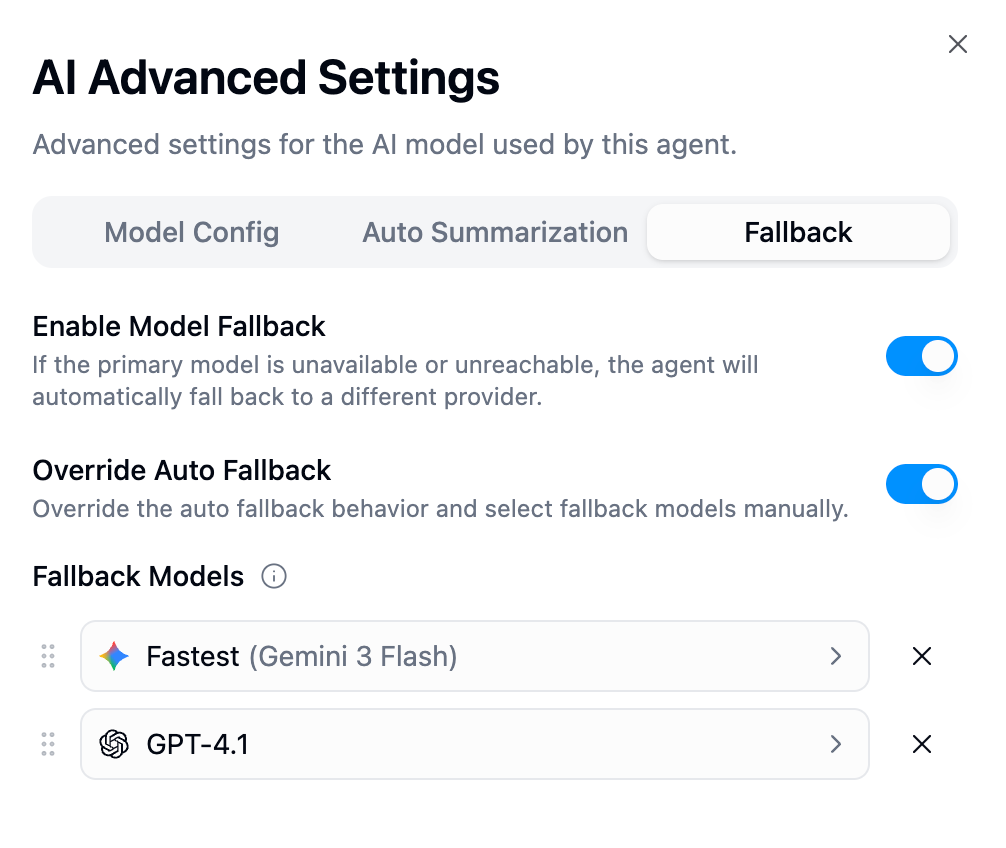

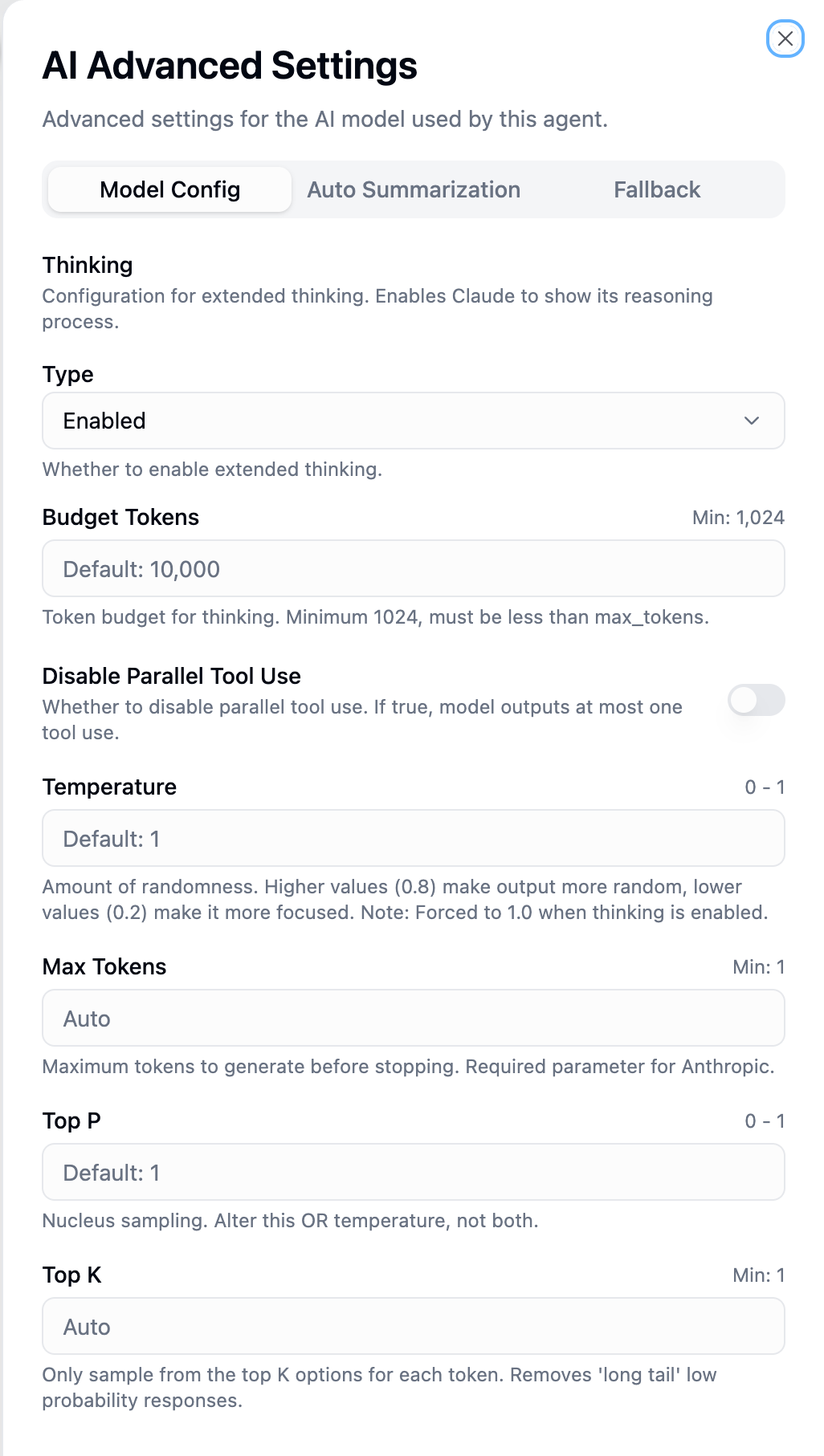

AI Advanced Settings

Fine-tune how your agent’s AI model behaves, manages long conversations, and handles failures. Access these settings by clicking the AI Advanced Settings button in your agent configuration.

- Model Config

- Auto Summarization

- Fallback

OpenAI Parameters

| Parameter | Range | Default | Description |
|---|---|---|---|
| Reasoning Effort | low, medium, high | medium | Controls computational effort before responding. Higher = more thorough reasoning but slower and more tokens. Only for o-series and GPT-5 models. |
| Temperature | 0–2 | 1 | Controls output randomness. 0 = deterministic, 2 = highly creative. |
| Max Output Tokens | 1+ | Auto | Upper bound for generated tokens (includes reasoning tokens). If unset, uses model default. |
| Max Tool Calls | 1+ | Auto | Limits total tool calls per response. Useful for cost control. |
| Top P | 0–1 | 1 | Nucleus sampling. Model considers only tokens in the top P probability mass. Only for GPT-4o, GPT-4.1, GPT-5.1, GPT-5.2, o3, o4. |
| Parallel Tool Calls | on/off | on | When on, model can execute multiple tools simultaneously. Disable if tools depend on each other’s results. |

Anthropic (Claude) Parameters

| Parameter | Range | Default | Description |
|---|---|---|---|
| Extended Thinking | enabled, disabled | enabled | Shows Claude’s reasoning process before the final answer. When enabled, temperature is forced to 1.0. |
| Budget Tokens | 1,024+ | 10,000 | Token budget for thinking. Higher = more thorough reasoning but slower. Only shown when thinking is enabled. Must be less than Max Tokens. |
| Temperature | 0–1 | 1 | Controls output randomness. Note: forced to 1.0 when Extended Thinking is enabled. |
| Max Tokens | 1+ | Auto | Maximum tokens to generate. Defaults: 64,000 (Claude 4.5), 8,192 (older models). |
| Top P | 0–1 | 1 | Nucleus sampling threshold. |
| Top K | 1+ | Auto | Limits sampling to top K most likely tokens. Lower values (10–40) produce more focused outputs by removing long-tail responses. |
| Disable Parallel Tool Use | on/off | off | When on, model outputs at most one tool call per response. Enable for strict sequential execution. |
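Several of these Anthropic settings interact: Extended Thinking forces temperature to 1.0, and Budget Tokens must be at least 1,024 and less than Max Tokens. The sketch below encodes just those rules from the table so you can sanity-check a configuration. It is an illustration, not Gumloop's validation code, and `ClaudeSettings` and `effective_settings` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClaudeSettings:
    extended_thinking: bool = True
    budget_tokens: int = 10_000
    temperature: float = 1.0
    max_tokens: Optional[int] = None  # None means "Auto"


def effective_settings(s: ClaudeSettings, model_default_max_tokens: int) -> dict:
    """Apply the constraints from the Anthropic table and return the values that take effect."""
    max_tokens = s.max_tokens if s.max_tokens is not None else model_default_max_tokens
    if not 0 <= s.temperature <= 1:
        raise ValueError("temperature must be between 0 and 1")
    temperature = s.temperature
    budget = None
    if s.extended_thinking:
        if s.budget_tokens < 1024:
            raise ValueError("budget_tokens must be at least 1,024")
        if s.budget_tokens >= max_tokens:
            raise ValueError("budget_tokens must be less than max_tokens")
        temperature = 1.0  # forced when Extended Thinking is enabled
        budget = s.budget_tokens
    return {"max_tokens": max_tokens, "temperature": temperature, "budget_tokens": budget}


print(effective_settings(ClaudeSettings(temperature=0.2), model_default_max_tokens=64_000))
```

With the 8,192-token default of older models, the 10,000-token default budget would fail the "less than Max Tokens" rule, so the budget (or Max Tokens) needs adjusting.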

Google (Gemini) Parameters

| Parameter | Range | Default | Description |
|---|---|---|---|
| Thinking Level | LOW, HIGH | HIGH | Controls depth of internal reasoning. LOW = faster responses, HIGH = deeper analysis. Only for Gemini 2.5 and 3 models. |
| Temperature | 0–2 | 1 | Controls output randomness. |
| Top P | 0–1 | 0.95 | Nucleus sampling. Note: Google’s default (0.95) is lower than other providers. |
| Top K | 1+ | Auto | Maximum number of candidate tokens considered at each generation step. |
| Max Output Tokens | 1+ | Auto | Maximum tokens to generate. |

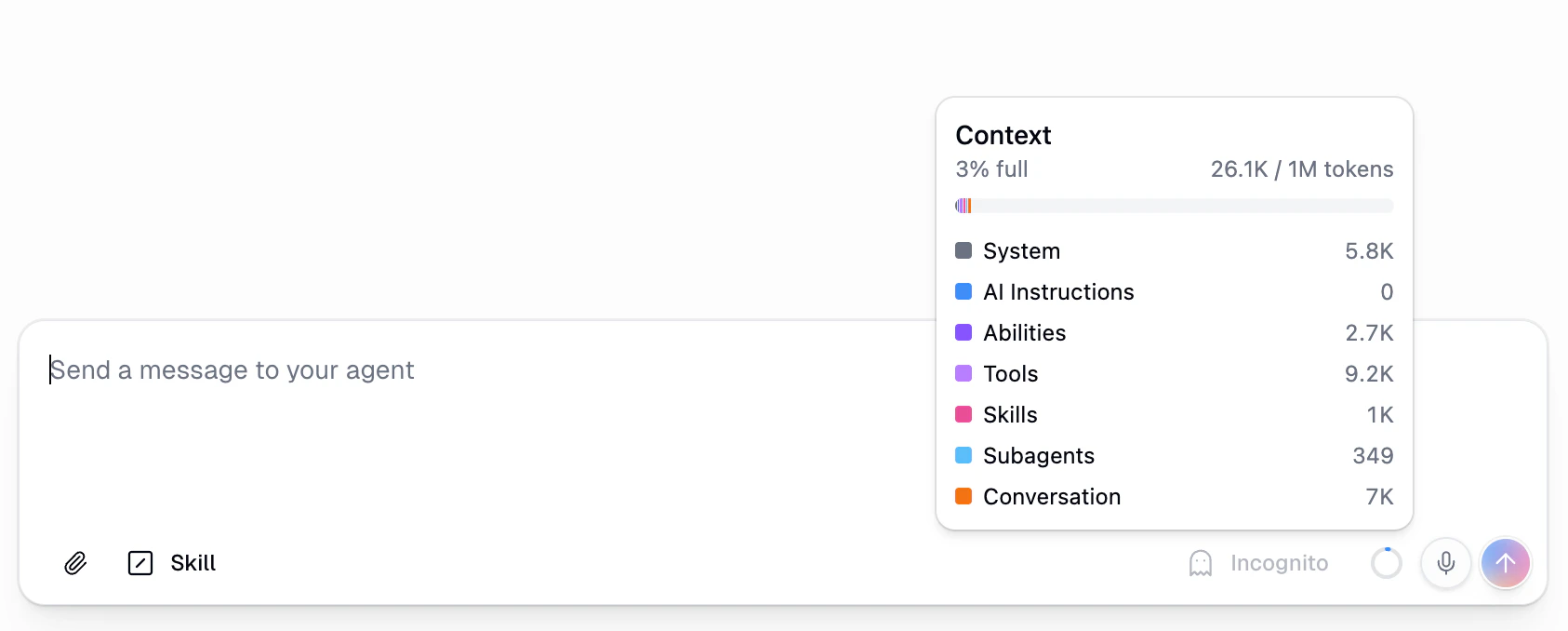

Context Usage Meter

The Context Usage Meter gives you real-time visibility into how much of your AI model’s context window is being used during a conversation. You’ll find it as a small circular icon in the bottom-right corner of the chat input area.

The meter breaks usage down into the following categories:

| Category | What It Includes |
|---|---|
| System | Gumloop’s internal system prompt that powers your agent |
| AI Instructions | The custom instructions you wrote for your agent |
| Abilities | Built-in capabilities like code execution and web search |
| Tools | MCP integrations and workflows you’ve connected |
| Skills | Any skills attached to the agent |
| Subagents | Token overhead from connected subagents |
| Conversation | The actual messages exchanged in the current chat |
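Each slice of the meter is essentially that category's token count divided by the model's context window. The figures below, including the 200,000-token window, are invented for illustration; Gumloop computes the real counts for you, and `usage_breakdown` is a hypothetical helper.

```python
def usage_breakdown(tokens: dict[str, int], context_window: int) -> dict[str, float]:
    """Percentage of the context window taken by each category, plus a total."""
    percentages = {category: 100 * count / context_window for category, count in tokens.items()}
    percentages["Total"] = sum(percentages.values())
    return percentages


example = {
    "System": 6_000, "AI Instructions": 2_000, "Abilities": 4_000, "Tools": 20_000,
    "Skills": 3_000, "Subagents": 1_000, "Conversation": 14_000,
}
for category, pct in usage_breakdown(example, context_window=200_000).items():
    print(f"{category:<16}{pct:5.1f}%")
```

In this made-up example, Tools is the largest fixed cost at 10%, which is the kind of overhead that grows as you connect more integrations.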

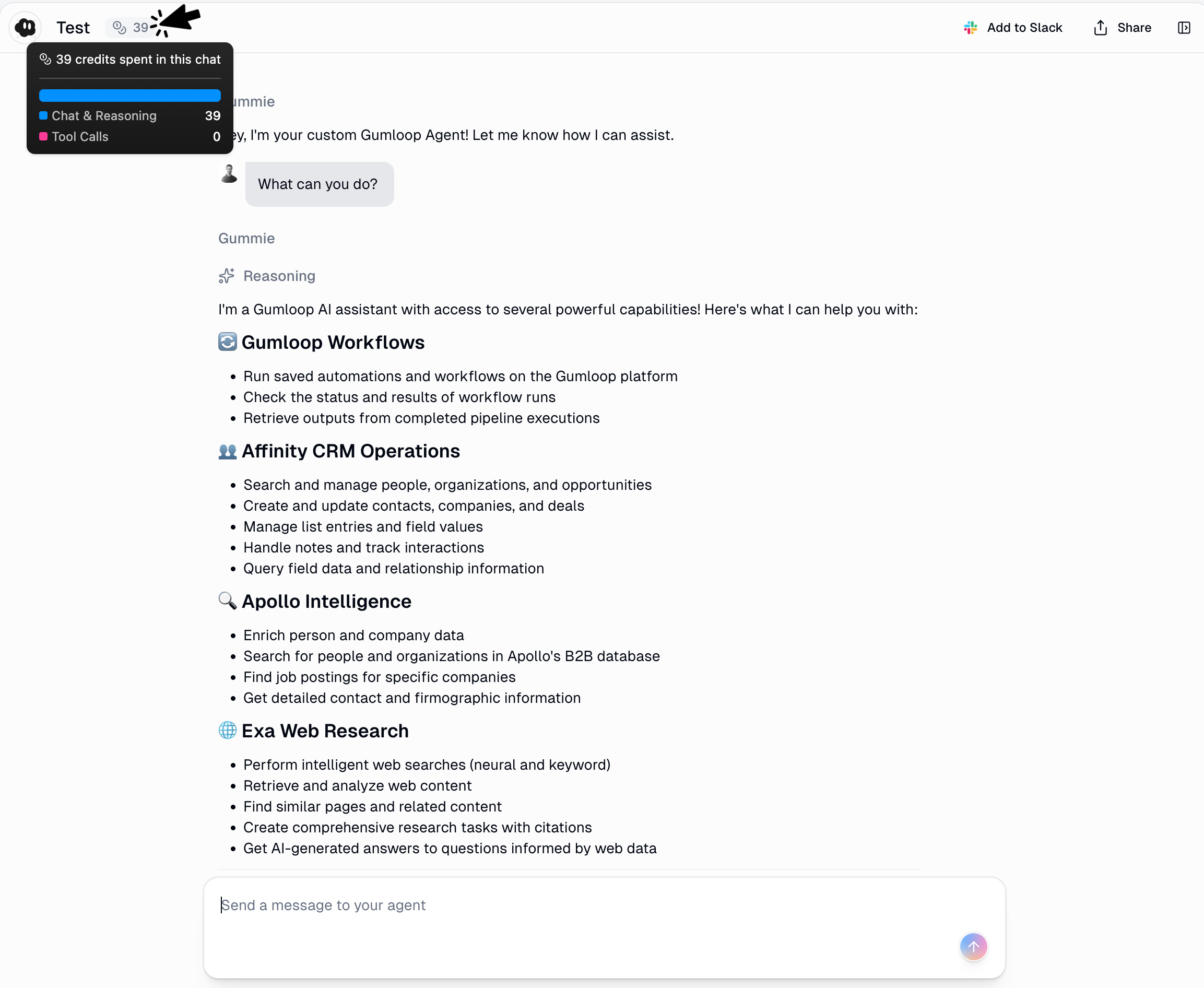

Understanding Credit Costs

Agents consume credits based on AI model usage, workflow executions, and integration operations.

Model Pricing Overview

- Budget Models

- Advanced Models

- Expert Models

| Model | ~Cost per Message | Best For |
|---|---|---|
| GPT-4.1 Mini | 2-3 credits | Simple tasks, quick responses |
| GPT-5 Mini | 2-3 credits | General queries |
| Claude Haiku 4.5 | 2-4 credits | Fast interactions |

Workflow and Integration Costs

When agents call workflows or integrations, additional costs apply:

| Node Type | Credit Cost | Examples |
|---|---|---|
| Free Nodes | 0 credits | Input/Output, Filter, Router, Most integrations |
| Low Cost | 2-3 credits | Ask AI (simple), Run Code, Custom Operators |
| Medium Cost | 10-30 credits | AI with large prompts, AI Vision |
| High Cost | 10-60+ credits | Data enrichment, Premium APIs |

- Free: Google Workspace, Slack, Airtable, Notion, Salesforce, HubSpot, 150+ more

- Paid: Data enrichment services (e.g., Hunter.io ~10 credits, ZoomInfo ~60 credits)
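To budget a workload, multiply the per-message range by the expected number of messages and add any paid lookups. This sketch uses the approximate figures from the tables above and assumes the Hunter.io and ZoomInfo costs apply per call; real costs also vary with prompt size and model choice. `estimate_credits` is a hypothetical helper.

```python
# Approximate ranges from the tables above (credits).
MODEL_COST_PER_MESSAGE = {
    "GPT-4.1 Mini": (2, 3),
    "GPT-5 Mini": (2, 3),
    "Claude Haiku 4.5": (2, 4),
}
PAID_LOOKUP_COST = {"Hunter.io": 10, "ZoomInfo": 60}  # "~" values, assumed per call


def estimate_credits(model: str, messages: int, lookups: dict[str, int]) -> tuple[int, int]:
    """Return a (low, high) credit estimate; free integrations add nothing."""
    low, high = MODEL_COST_PER_MESSAGE[model]
    extra = sum(PAID_LOOKUP_COST[service] * calls for service, calls in lookups.items())
    return messages * low + extra, messages * high + extra


print(estimate_credits("Claude Haiku 4.5", messages=20, lookups={"Hunter.io": 5}))  # (90, 130)
```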

Optimizing Credit Usage

Choose Appropriate Models

Keep Conversations Focused

Write Clear Prompts

Limit Tool Count

What Affects Credit Costs

Tracking Credit Usage

- Real-time Display: Credits shown next to each agent response as it streams

- Conversation History: View total credits per conversation thread

- History Page: See credit costs for agent chats and Agent node executions (when run via workflows)

- Account Dashboard: Track total credits used across all agents and timeframes

Credentials & Authentication

When an agent uses integrations and workflows, it needs credentials to access external services. You can control which account each app uses from the agent’s configuration page.

- Use Personal Default: Each person who runs the agent uses their own default account. This is the default.

- Use Team Default (team agents only): Everyone on the team uses the same shared account.

- Use Specific Account: Pin a specific account for this agent. Useful when you have multiple accounts for the same service.

Personal Agents

Team Agents

- Use Personal Default — same as personal agents, each person uses their own account

- Use Team Default — everyone on the team uses the same shared team account

- Use Specific Account — pin a specific team account for this agent
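The three credential options behave like a simple resolution rule. This sketch assumes that rule as described above and raises an error where the agent would instead notify you about missing authentication; the function, enum, and account addresses are hypothetical.

```python
from enum import Enum
from typing import Optional


class CredentialMode(Enum):
    PERSONAL_DEFAULT = "Use Personal Default"
    TEAM_DEFAULT = "Use Team Default"
    SPECIFIC_ACCOUNT = "Use Specific Account"


def resolve_account(mode: CredentialMode, *, is_team_agent: bool,
                    runner_default: Optional[str] = None,
                    team_default: Optional[str] = None,
                    pinned: Optional[str] = None) -> str:
    """Pick which connected account an app call should use."""
    if mode is CredentialMode.SPECIFIC_ACCOUNT:
        if pinned is None:
            raise LookupError("no account pinned for this agent")
        return pinned
    if mode is CredentialMode.TEAM_DEFAULT:
        if not is_team_agent:
            raise ValueError("Use Team Default is only available on team agents")
        if team_default is None:
            raise LookupError("team has no default account for this app")
        return team_default
    # Personal default: whoever runs the agent uses their own account.
    if runner_default is None:
        raise LookupError("missing authentication: connect an account on the credentials page")
    return runner_default


print(resolve_account(CredentialMode.TEAM_DEFAULT, is_team_agent=True,
                      runner_default="alice@example.com", team_default="support@example.com"))
```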

Setting Up Credentials

- Visit your credentials page

- Authenticate with required services (Gmail, Salesforce, etc.)

- Set credentials as your personal default

If a required credential is missing when the agent runs:

- The agent will notify you about missing authentication

- You’ll receive a link to the credentials page

- After authenticating, return and retry your request

Your Data Stays Private

Controlled Access

App Rules

App Rules let you put guardrails on the tools your agent can use. Instead of just toggling tools on or off, App Rules intercept individual tool calls and block or tag them based on conditions you define. For example, you can create a rule that prevents your agent from sending Slack messages to a specific channel, or blocks Linear ticket creation unless at least two labels are attached.

Agent-Level Rules

Agent-level rules target a specific agent, so they only apply to tool calls made by that agent. There are two ways to create them. From the agent configuration panel: Open the detail view of any connected app in your agent’s config. The Rules tab shows all rules targeting this agent for that app. You can toggle rules on or off and click through to the rule detail sheet.
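App Rules sit between the agent and the app: each tool call is checked before it runs. The sketch below models the two examples from the text (no Slack messages to one channel, Linear tickets need at least two labels) as agent-level rules. The tool names `send_message` and `create_issue`, the channel, and the agent names are hypothetical, and tagging is not modeled.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class AppRule:
    name: str
    agent: str  # agent-level rule: only this agent's calls are checked
    app: str
    tool: str
    blocks: Callable[[dict[str, Any]], bool]  # True means "block this call"


RULES = [
    AppRule("No posts to #announcements", "support-bot", "slack", "send_message",
            lambda args: args.get("channel") == "#announcements"),
    AppRule("Linear tickets need 2+ labels", "support-bot", "linear", "create_issue",
            lambda args: len(args.get("labels", [])) < 2),
]


def intercept(agent: str, app: str, tool: str, args: dict[str, Any],
              rules: list[AppRule] = RULES) -> tuple[bool, str]:
    """Check a tool call against matching rules before it runs."""
    for rule in rules:
        if (rule.agent, rule.app, rule.tool) == (agent, app, tool) and rule.blocks(args):
            return False, f"blocked: {rule.name}"
    return True, "allowed"


print(intercept("support-bot", "linear", "create_issue", {"labels": ["bug"]}))
# (False, 'blocked: Linear tickets need 2+ labels')
```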

App Rules Documentation

Best Practices & Troubleshooting

- Best Practices

- Troubleshooting

Start Simple, Add Complexity

Treat Agents as Work in Progress

- Review conversation history regularly for patterns

- When agents make mistakes, ask: “What could I add to your instructions to prevent this?”

- Document edge cases and add explicit handling rules

- Celebrate successful patterns and codify them in instructions

Design Workflows for Agent Use

- Always use Input and Output nodes so agents understand parameters

- Keep workflows focused on single responsibilities

- Use descriptive names that indicate purpose

- Write clear descriptions explaining when to use the workflow

- Test workflows independently before giving them to agents

Set Clear Boundaries

Monitor and Measure Performance

- Time saved: Hours saved per week through automation

- Success rate: Tasks completed successfully vs requiring intervention

- Tool usage: Which workflows/integrations are used most frequently

- Credit efficiency: Cost per completed task or interaction

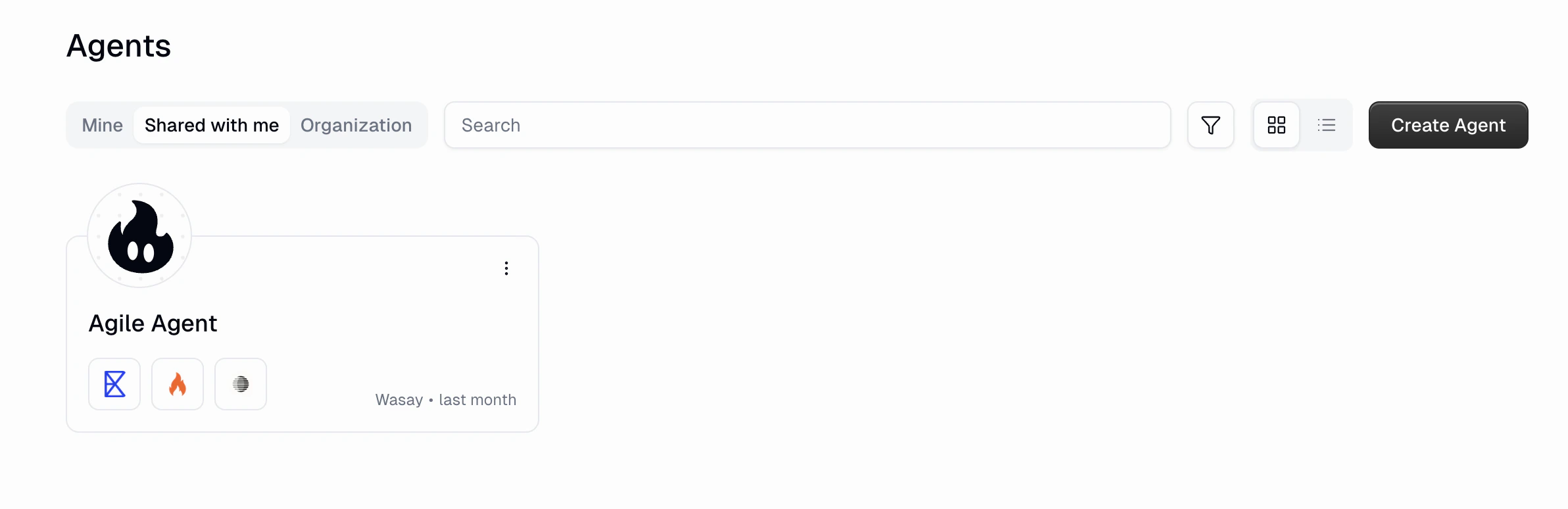

Finding Agents

The Agents page lets you browse all agents you have access to. Use the tabs at the top to switch between views:

| Tab | What It Shows |
|---|---|
| Mine | Agents you created |
| Shared with me | Agents that others have shared with you directly or via your organization |
| Organization | All agents visible to your entire organization |

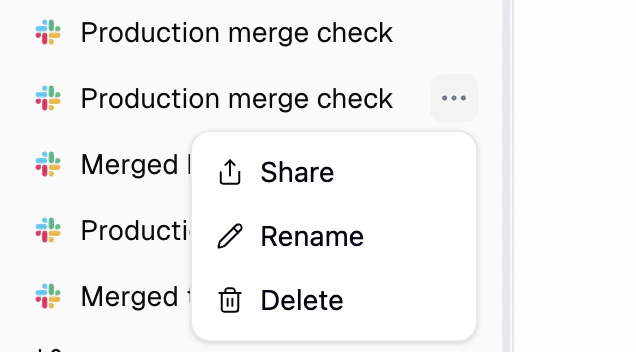

Managing Chats

Every conversation with your agent appears in the sidebar. You can rename, share, or delete any chat from the context menu. Right-click a chat (or click the three-dot menu) to see your options:

| Action | What it does |
|---|---|
| Share | Share the conversation with others as a read-only link |
| Rename | Give the chat a custom name so you can find it later |
| Delete | Permanently remove the conversation |

General Preferences

Follow-Up Prompts

When enabled, the agent suggests follow-up prompts after each response to help you continue the conversation. You’ll find this setting in General Preferences in your agent’s settings; it is enabled by default. Disable it if you don’t want suggested next steps shown after each agent response.

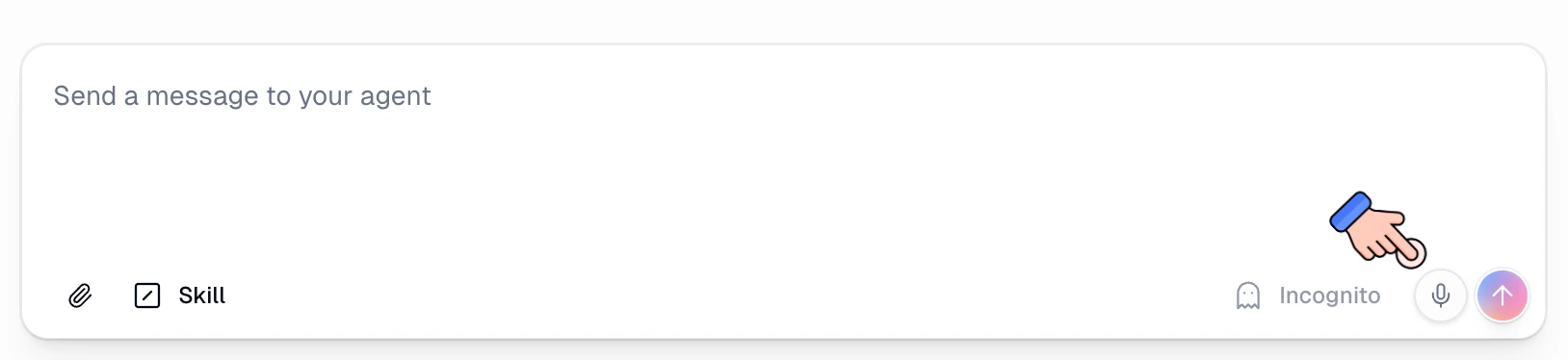

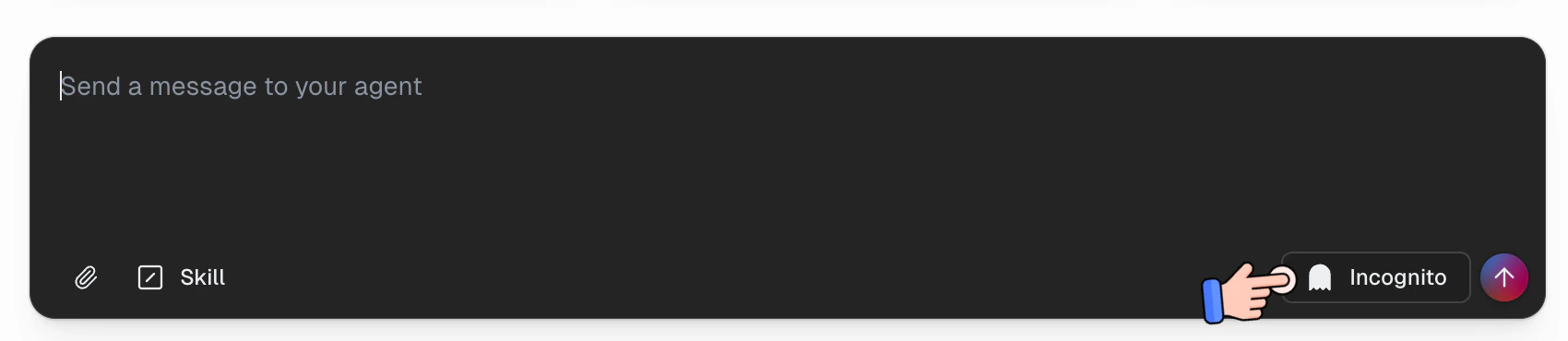

Incognito Mode

Incognito mode lets you have conversations with an agent that are not saved to the database. Messages are held only in temporary memory during the conversation and are automatically deleted after 24 hours.

How to Use Incognito Mode

Toggle the Incognito button in the chat input bar before sending your message. When incognito is active, the conversation bypasses permanent storage entirely.

What Happens in Incognito Mode

| Behavior | Standard Chat | Incognito Chat |
|---|---|---|
| Message storage | Saved permanently to the database | Not saved to the database |
| Visible in chat history | Yes | No. Hidden from the sidebar, search, and all chat listings |
| Included in data exports | Yes | No |
| Files and artifacts | Stored permanently | Stored in ephemeral storage and auto-deleted after 24 hours. No permanent artifact records are created |
| Subagent behavior | N/A | Incognito propagates to all subagents automatically |
| Used for self-improvement | Yes, agent can learn from interactions | No, incognito conversations are excluded from reflections |